Sparse large language models (LLMs) based on the Mixture of Experts (MoE) framework have gained traction for their ability to scale efficiently by activating only a subset of parameters per token. This dynamic sparsity allows MoE models to retain high representational capacity while limiting computation per token. However, as their complexity grows and model sizes approach trillions of parameters, training them efficiently requires both algorithmic innovation and tightly integrated hardware-software optimization. These challenges are especially relevant when deploying models on non-standard AI accelerators like Ascend NPUs, which require specific architectural alignment to deliver optimal performance.

A major technical challenge lies in the inefficient utilization of hardware resources while training sparse LLMs. Since only a portion of the parameters is active for each token, workloads across devices become unbalanced, leading to synchronization delays and underused processing power. This imbalance also affects memory utilization, because different experts process different numbers of tokens and some receive more than their capacity allows. These inefficiencies are compounded at large scale, such as across thousands of AI chips, where communication and memory management bottlenecks significantly hinder throughput. The inability to fully harness the computational promise of sparsity in practice restricts the deployment of such models on hardware systems like Ascend NPUs.
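To make the imbalance concrete, here is a minimal sketch of top-k routing with a skewed router. The weighting scheme, the function names, and the token and expert counts are all illustrative assumptions, not details from the Pangu work; the point is only that uneven routing produces uneven per-expert load.

```python
import random
from collections import Counter

def route_tokens(num_tokens, num_experts, top_k, skew=1.0, seed=0):
    """Assign each token to top_k distinct experts; return tokens per expert."""
    rng = random.Random(seed)
    # Hypothetical router preference: expert i gets weight 1 / (i + 1) ** skew.
    weights = [1.0 / (i + 1) ** skew for i in range(num_experts)]
    counts = Counter()
    for _ in range(num_tokens):
        chosen = set()
        # Keep sampling until the token has top_k distinct experts.
        while len(chosen) < top_k:
            chosen.add(rng.choices(range(num_experts), weights=weights)[0])
        counts.update(chosen)
    return [counts[e] for e in range(num_experts)]

def imbalance(loads):
    """Max load divided by mean load; 1.0 means perfectly balanced."""
    mean = sum(loads) / len(loads)
    return max(loads) / mean

loads = route_tokens(num_tokens=4096, num_experts=8, top_k=2)
print("tokens per expert:", loads)
print("imbalance (max / mean):", round(imbalance(loads), 2))
```

If each expert lived on its own device, the device holding the busiest expert would set the step time while the others wait, which is the synchronization delay described above.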

Several strategies have been proposed to tackle these challenges. These include auxiliary losses to balance token distribution across experts and drop-and-pad strategies that limit expert overload by discarding tokens exceeding capacity. However, these techniques either reduce model performance or introduce inefficiencies in memory and computation. Other efforts include heuristic expert placement and traditional communication patterns like All-to-All dispatching, but these often fail to scale well or maintain high throughput. Moreover, standard memory-saving techniques like recomputation are usually coarse-grained, targeting whole layers instead of specific operations, leading to increased runtime without proportional memory savings.
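The drop-and-pad strategy mentioned above can be sketched in a few lines. This is a generic illustration under stated assumptions (one expert per token, a fixed `capacity`, and a hypothetical `pad_id` marker), not the implementation of any particular framework.

```python
def drop_and_pad(expert_assignments, num_experts, capacity, pad_id=-1):
    """Return fixed-size per-expert buffers plus the ids of dropped tokens."""
    buffers = [[] for _ in range(num_experts)]
    dropped = []
    for token_id, expert in enumerate(expert_assignments):
        if len(buffers[expert]) < capacity:
            buffers[expert].append(token_id)
        else:
            # Expert is full: the token is discarded for this layer.
            dropped.append(token_id)
    for buf in buffers:
        # Underfull experts are padded so every buffer has the same shape.
        buf.extend([pad_id] * (capacity - len(buf)))
    return buffers, dropped

# Six tokens, three experts, capacity of two tokens per expert.
buffers, dropped = drop_and_pad([0, 0, 0, 1, 0, 2], num_experts=3, capacity=2)
print(buffers)  # [[0, 1], [3, -1], [5, -1]]
print(dropped)  # [2, 4]
```

Both costs named in the text show up here: the two dropped tokens lose their expert computation, which can hurt model quality, and the two padded slots consume memory and compute without doing useful work.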

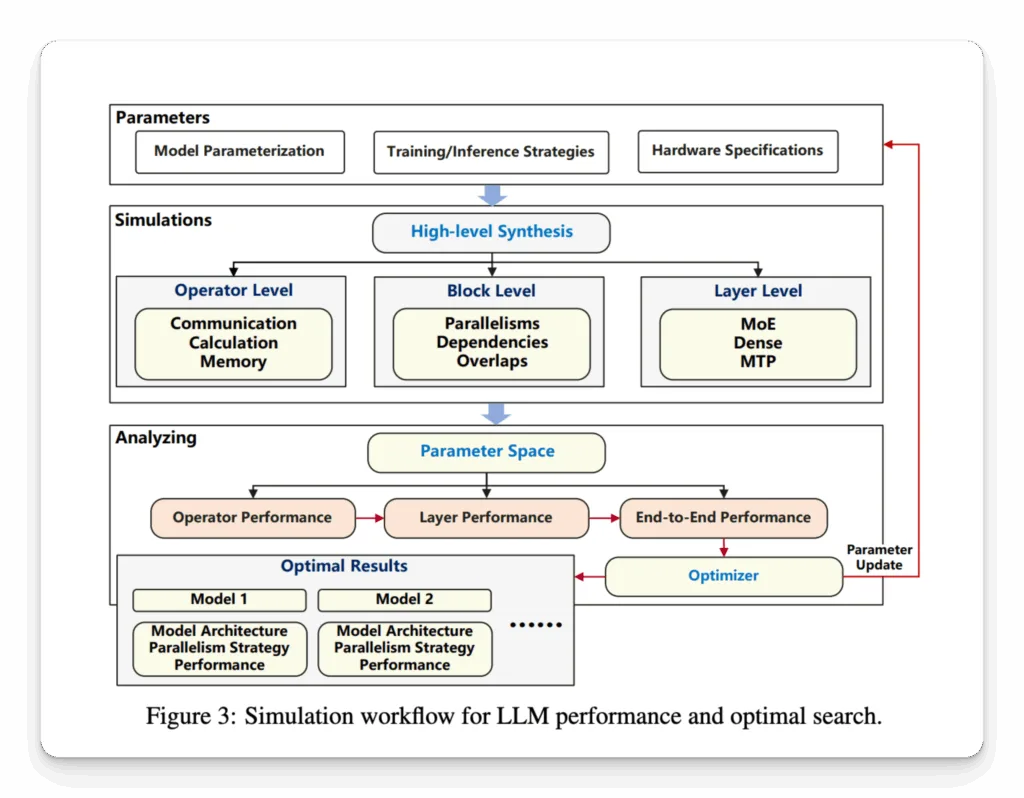

Researchers from the Pangu team at Huawei Cloud introduced a highly structured and optimized training approach for large MoE models tailored to Ascend NPUs. They developed Pangu Ultra MoE, a sparse LLM with 718 billion parameters, focusing on aligning model architecture and system design with the capabilities of the Ascend hardware. Their approach begins with a simulation-based model configuration process that evaluates thousands of architecture variants using metrics grounded in actual hardware behavior. These simulations inform design decisions before any physical training is undertaken, thus saving substantial computational resources and enabling informed tuning of model hyperparameters.

The simulation method analyzes combinations of parameters such as the number of layers, hidden size, and expert count using a five-dimensional parallelism strategy that includes Pipeline Parallelism, Tensor Parallelism, Expert Parallelism, Data Parallelism, and Context Parallelism. The final model configuration adopted by Huawei included 256 experts, a hidden size of 7680, and 61 transformer layers. To further optimize performance, the researchers integrated an Adaptive Pipe Overlap mechanism to mask communication costs and used hierarchical All-to-All communication to reduce inter-node data transfer. They also employed fine-grained recomputation, such as recomputing only key-value vectors in attention modules, and introduced tensor swapping to dynamically offload activation memory to the host.
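As a rough illustration of what a parallelism search space looks like, the sketch below enumerates candidate layouts for a given device count. It is not the team's simulator. It assumes a common convention in which the device count equals TP × PP × CP × DP and the expert-parallel group is carved out of the data-parallel dimension, so EP must divide both DP and the expert count. The function name and the candidate sizes are hypothetical.

```python
from itertools import product

def candidate_layouts(num_devices, num_experts, sizes=(1, 2, 4, 8, 16)):
    """Enumerate (tp, pp, cp, dp, ep) layouts that exactly tile num_devices."""
    layouts = []
    for tp, pp, cp in product(sizes, repeat=3):
        model_parallel = tp * pp * cp
        if num_devices % model_parallel:
            continue  # this combination does not tile the cluster
        dp = num_devices // model_parallel
        for ep in sizes:
            # Assumption: EP groups live inside DP and split experts evenly.
            if dp % ep == 0 and num_experts % ep == 0:
                layouts.append({"tp": tp, "pp": pp, "cp": cp, "dp": dp, "ep": ep})
    return layouts

layouts = candidate_layouts(num_devices=64, num_experts=256)
print(len(layouts), "candidate layouts; first:", layouts[0])
```

A real simulator would then score each layout with a cost model for compute, communication, and memory on the target hardware; that scoring step is omitted here because the article does not describe it in enough detail to reproduce.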

Pangu Ultra MoE achieved a Model FLOPs Utilization (MFU) of 30.0% and a throughput of 1.46 million tokens per second on 6,000 Ascend NPUs, compared with a baseline of 18.9% MFU and 0.61 million tokens per second on 4,000 NPUs. The researchers also introduced dynamic expert placement strategies, improving device-level load balance and achieving a relative 10% MFU improvement. The model performed competitively on benchmark evaluations, attaining 81.3% on AIME2024, 97.4% on MATH500, 94.8% on CLUEWSC, and 91.5% on MMLU. In the healthcare domain, it outperformed DeepSeek R1, scoring 87.1% on MedQA and 80.8% on MedMCQA, which indicates strength in domain-specific applications.
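Because the two runs use different numbers of NPUs, the raw throughput figures are easier to compare per device. The short calculation below uses only the numbers quoted above; the helper name is illustrative, and the comparison assumes the same model and the same per-device peak FLOPs in both runs.

```python
def per_device_throughput(tokens_per_second, num_devices):
    """Tokens processed per second by a single device, on average."""
    return tokens_per_second / num_devices

optimized = per_device_throughput(1.46e6, 6000)  # about 243 tokens/s per NPU
baseline = per_device_throughput(0.61e6, 4000)   # 152.5 tokens/s per NPU

throughput_gain = optimized / baseline           # about 1.60x
mfu_gain = 30.0 / 18.9                           # about 1.59x

print(f"per-NPU throughput gain: {throughput_gain:.2f}x")
print(f"MFU gain:                {mfu_gain:.2f}x")
```

The two ratios nearly coincide, as expected: for a fixed model, MFU scales with the tokens each device processes per second, so the per-device speedup and the MFU improvement are two views of the same gain.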

This study illustrates how the Pangu team at Huawei effectively tackled the core difficulties of training massive MoE models on specialized hardware. Their systematic architecture search, efficient communication techniques, and tailored memory optimizations represent a strong framework for scalable AI training. The work demonstrates practical ways to unlock the performance potential of sparse models and sets a direction for future system-aware AI design.

Nikhil is an intern consultant at Marktechpost. He is pursuing an integrated dual degree in Materials at the Indian Institute of Technology, Kharagpur. Nikhil is an AI/ML enthusiast who is always researching applications in fields like biomaterials and biomedical science. With a strong background in Material Science, he is exploring new advancements and creating opportunities to contribute.